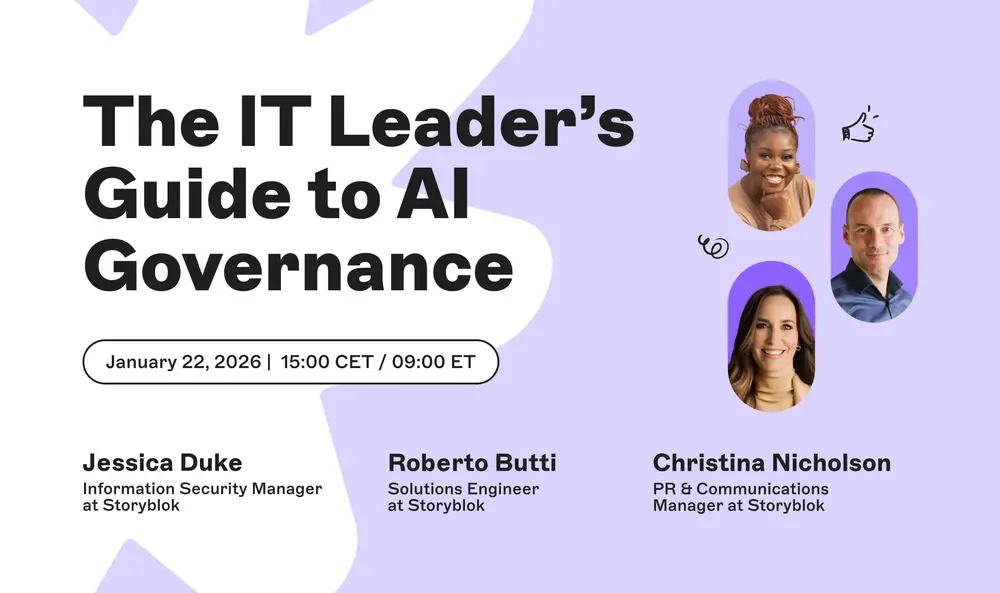

Generative AI is rapidly transitioning from experimental projects to everyday enterprise operations, often outpacing the governance frameworks designed to manage it. As organizations scale their AI initiatives, new challenges around security, data privacy, compliance, and control emerge, forcing leaders to innovate at speed while maintaining trust and transparency. To address these complexities, a fireside panel of IT and communications experts convened on January 22, 2025, to share practical strategies for navigating AI adoption and governance.

The Rapid Adoption of Generative AI

Over the past two years, generative AI has moved from niche experimentation to mainstream business tooling. Enterprises across industries are deploying AI for content generation, customer service automation, code assistance, and data analysis. According to multiple industry surveys, more than 70% of organizations are either piloting or have already integrated generative AI into select workflows. However, this acceleration has not been matched by corresponding governance models. Many companies still rely on ad hoc policies, leaving them vulnerable to data leaks, compliance violations, and reputational damage.

The panel highlighted that speed often wins in competitive environments, but without proper guardrails, the risks multiply. For example, employees might inadvertently share sensitive internal data with public AI models, or produce content that violates copyright. The challenge for IT leaders is to enable innovation without sacrificing security or regulatory adherence. This balancing act requires a shift from reactive policing to proactive governance design.

Why Governance Matters

AI governance encompasses the policies, processes, and technical controls that ensure AI systems are used responsibly, ethically, and in compliance with applicable law. Key areas include data privacy (GDPR, CCPA), model explainability, bias mitigation, and accountability. The European Union's AI Act, which entered into force in August 2024 and whose obligations phase in over the following years, adds another layer of regulatory pressure. Enterprises must demonstrate that their AI use is safe and transparent.

The panelists emphasized that governance is not about stifling innovation but about building trust. When teams have clear guidelines and reliable tools, they can move faster with confidence. For instance, using a headless CMS with API-first architecture allows organizations to manage content centrally and enforce governance rules at the system level, rather than relying on individual compliance. This approach reduces risk while maintaining agility.

Key Insights from the Panel

The fireside chat featured three experts with distinct perspectives: an information security manager, a solutions engineer, and a PR and communications manager. Each brought unique insights into how their domains intersect with AI governance.

The information security manager stressed that the biggest threat is not malicious attacks but unintentional data exposure. Employees often paste proprietary code or customer data into public AI tools without realizing the implications. She advocated for employee training, data classification systems, and AI gateways that can mask sensitive information before it reaches external models. She also noted that security teams must work closely with legal and compliance to ensure policies are practical and enforceable.
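To make the gateway idea concrete, here is a minimal sketch of masking sensitive values in a prompt before it leaves the organization. It is not something the panel presented: the patterns, the placeholder format, and the `mask_prompt` name are all assumptions chosen for illustration, and a real gateway would draw on the organization's data classification system rather than a handful of regular expressions.

```python
import re

# Hypothetical patterns for illustration only; a production gateway would
# use the organization's own classification rules, not three regexes.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


def mask_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a placeholder and count hits per category."""
    counts: dict[str, int] = {}
    masked = prompt
    for label, pattern in PATTERNS.items():
        # subn returns the rewritten string and the number of substitutions.
        masked, hits = pattern.subn(f"[{label}_REDACTED]", masked)
        if hits:
            counts[label] = hits
    return masked, counts
```

Calling `mask_prompt("Contact jane.doe@example.com, SSN 123-45-6789")` returns the masked text together with a per-category count. The count could feed the training and policy work she described, since it shows which kinds of data employees most often try to send.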

The solutions engineer focused on the technical infrastructure required for governed AI. He argued that legacy monolithic content systems are ill-equipped to handle the dynamic nature of AI workflows. Instead, modern API-first platforms allow organizations to build composable architectures where governance layers can be inserted between AI services and data sources. This modularity makes it easier to audit, test, and update AI integrations as regulations evolve. He also highlighted the importance of content modeling: structured, tagged content that can be reused safely across AI applications.
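As a rough illustration of what structured, tagged content could look like in practice, the sketch below models a content entry with a classification and tags, then filters entries before they are handed to an AI service. The field names, the `no-ai` tag, and the allowed classifications are hypothetical and do not come from any particular CMS.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContentEntry:
    """A structured content entry; all field names here are hypothetical."""
    entry_id: str
    body: str
    classification: str  # e.g. "public", "internal", "confidential"
    tags: frozenset[str] = field(default_factory=frozenset)


# Assumed policy: only these classifications may be passed to an AI service.
AI_ALLOWED_CLASSIFICATIONS = {"public", "internal"}
# Assumed tag that editors set to opt an entry out of AI reuse.
AI_BLOCK_TAG = "no-ai"


def eligible_for_ai(entry: ContentEntry) -> bool:
    """True when the entry's classification is allowed and it is not opted out."""
    return (
        entry.classification in AI_ALLOWED_CLASSIFICATIONS
        and AI_BLOCK_TAG not in entry.tags
    )


def select_for_ai(entries: list[ContentEntry]) -> list[ContentEntry]:
    """Keep only the entries that pass the governance check, in original order."""
    return [entry for entry in entries if eligible_for_ai(entry)]
```

Because the check lives in one function that sits between the content store and the AI integration, updating the policy as regulations change means editing the allowed set or the tag rule, not every integration that consumes the content.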

The PR and communications manager addressed the reputational aspects. She explained that trust is built through transparency. Companies that disclose when and how they use AI, and that provide clear disclaimers, are more likely to maintain customer confidence. She also warned against using AI for sensitive communications without human review, as even advanced models can produce tone-deaf or biased language. Her advice was to create internal guidelines for AI-generated content, including mandatory human oversight for any customer-facing material.

Practical Strategies for IT Leaders

The panel offered several actionable takeaways for IT leaders looking to implement AI governance. First, establish a cross-functional AI steering committee that includes security, legal, compliance, IT, and business stakeholders. This group should define acceptable use policies, risk categories, and escalation procedures. Second, invest in content infrastructure that supports governance. A headless CMS with robust permissions, versioning, and metadata capabilities can serve as a single source of truth for AI training data and output. Third, adopt an iterative approach to governance. Rather than trying to build a perfect framework upfront, launch with basic guardrails and refine based on real-world incidents and feedback.

Another key point was the importance of visibility. IT leaders need dashboards that show which AI tools are being used, by whom, and with what data. This monitoring helps detect policy violations early and supports audit readiness. Additionally, organizations should consider implementing AI-specific security tools that can inspect prompts and responses for sensitive content, similar to how traditional DLP (Data Loss Prevention) tools work.
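The visibility point can be sketched as a small aggregation over usage events: who used which tool, with what class of data, and which events break policy. The event fields, the approved tool list, and the restricted classifications below are assumptions for illustration. A real dashboard would read from gateway or proxy logs.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """One recorded AI interaction; the fields are hypothetical."""
    user: str
    tool: str
    data_classification: str


# Assumed policy values, for illustration only.
APPROVED_TOOLS = {"internal-assistant"}
RESTRICTED_CLASSIFICATIONS = {"confidential"}


def usage_by_tool_and_user(events: list[UsageEvent]) -> Counter:
    """Count events per (tool, user) pair for a usage overview."""
    return Counter((event.tool, event.user) for event in events)


def find_violations(events: list[UsageEvent]) -> list[UsageEvent]:
    """Flag events that used an unapproved tool or involved restricted data."""
    return [
        event
        for event in events
        if event.tool not in APPROVED_TOOLS
        or event.data_classification in RESTRICTED_CLASSIFICATIONS
    ]
```

The counter answers the "which tools, by whom" question, while `find_violations` produces the list a security team would review or keep for audit evidence.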

Finally, the panel emphasized culture over controls. No amount of technology will prevent misuse if the organizational culture does not value responsible AI use. Leaders must model ethical behavior, reward transparency, and encourage teams to speak up about potential risks. Training should be continuous and contextual, tailored to different roles such as developers, marketers, and customer support agents.

As AI continues to evolve, so must governance approaches. The panel agreed that the goal is not perfection but progress through iteration and collaboration. By integrating governance into everyday workflows, leveraging modern architectures, and fostering a culture of responsibility, enterprises can harness the power of generative AI while managing its risks. The conversation ended with a reminder that the most successful organizations will be those that treat governance as an enabler, not a barrier.

Source: Help Net Security